Colada

Team: Will Ruby, Rachel Ariavatkul, Amanda Matzenbach

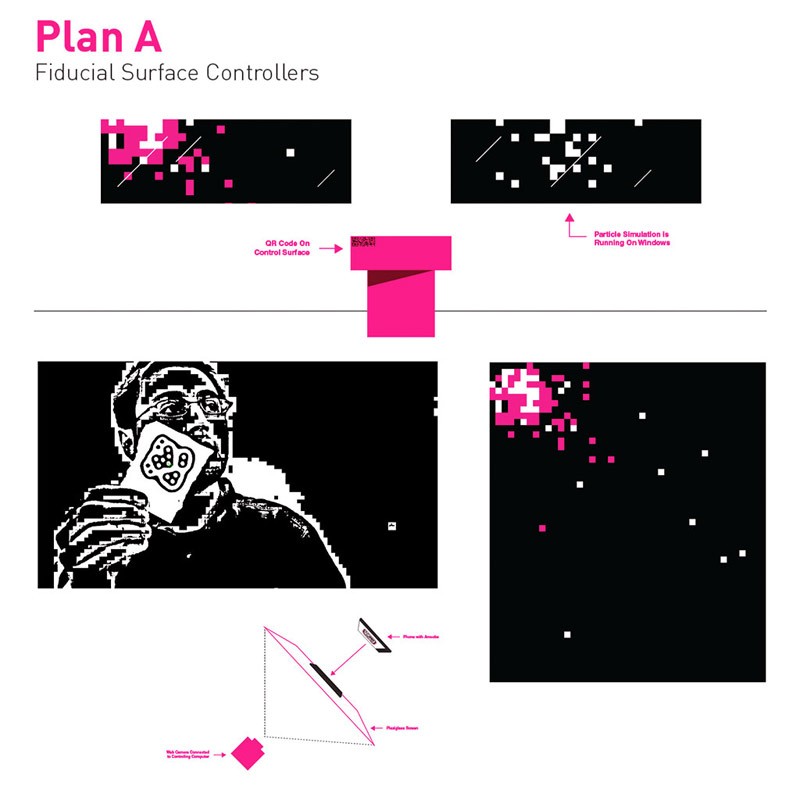

This team was interested in creating a collaborative visualizer with the mobile device as the controller. Initial research focused on an interaction built around the fiducial markers used by the reacTIVision project. In this prototype, the user scanned a QR code that linked to a URL, which displayed a graphic marker on the user’s mobile device; the device could then be placed on a transparent surface in front of a camera. Using computer vision, the system could track the orientation of the mobile device and let the user interact with the projected visuals.

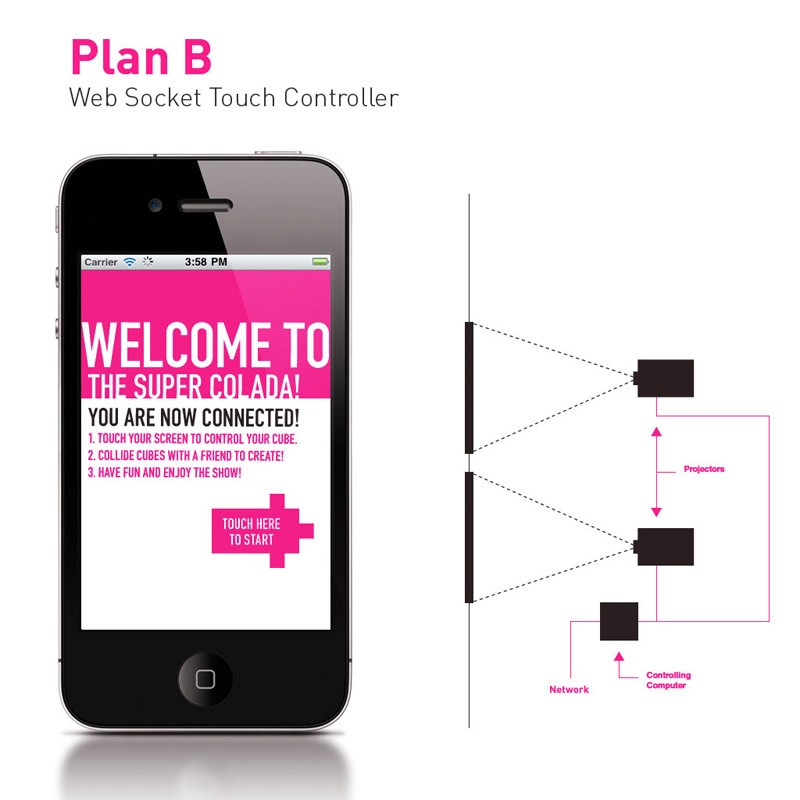

While this solution was both novel and functional, the speed of the interaction was limited by the frame rate of the camera and tracking software, and testing suggested that users might struggle to adapt to the interaction in a short time frame. The final prototype instead used a web application with a touch interface, which proved both immediately responsive and intuitive for users.

Several iterations of the visual interaction were prototyped, some more game-like and some more abstract. The final implementation is a physics-driven system of generative visuals. When users’ markers collide on screen, persistent geometry is generated based on their velocity and proximity, which encourages multi-user collaboration.
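To make the collision behavior concrete, here is a minimal sketch of how geometry might be derived from velocity and proximity. It is not the project's actual code: the names `Marker`, `Shape`, `collide`, and `step`, the marker radius, and the sizing formula are all assumptions chosen for illustration, and it is written in Python rather than whatever the web application used.

```python
import math
from dataclasses import dataclass


@dataclass
class Marker:
    """A user's on-screen marker (hypothetical structure)."""
    x: float
    y: float
    vx: float
    vy: float
    radius: float = 20.0  # assumed collision radius in pixels


@dataclass
class Shape:
    """A piece of persistent geometry left behind by a collision."""
    x: float
    y: float
    size: float
    sides: int


def collide(a: Marker, b: Marker):
    """Return a Shape if the two markers overlap, otherwise None."""
    dist = math.hypot(b.x - a.x, b.y - a.y)
    reach = a.radius + b.radius
    if dist >= reach:
        return None
    # Velocity term: how fast the markers move relative to each other.
    rel_speed = math.hypot(b.vx - a.vx, b.vy - a.vy)
    # Proximity term: 0 when just touching, 1 when centers coincide.
    overlap = 1.0 - dist / reach
    return Shape(
        x=(a.x + b.x) / 2,
        y=(a.y + b.y) / 2,
        size=rel_speed * (1.0 + overlap),         # assumed sizing rule
        sides=3 + min(5, int(rel_speed // 2)),    # faster hits, more sides
    )


def step(markers, shapes, dt):
    """Advance every marker by dt, then record geometry for each colliding pair."""
    for m in markers:
        m.x += m.vx * dt
        m.y += m.vy * dt
    for i in range(len(markers)):
        for j in range(i + 1, len(markers)):
            shape = collide(markers[i], markers[j])
            if shape is not None:
                shapes.append(shape)  # shapes persist across frames
```

In this sketch, shapes are only ever appended to the list, which stands in for the "persistent" quality described above: the longer and more energetically users interact, the more geometry accumulates on screen.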

SEO Colada Documentation from Will Ruby on Vimeo.